$ cat bio.md

I’m Matt, a final-year PhD candidate at Princeton University working with Prof. Peter Melchior in the Dynamical Learning Lab. My research focuses on stochastic optimization, representation learning, and dynamical systems, with the goal of using principles from physics to build more capable and trainable models.

Prior to Princeton, I developed large-scale simulations of complex physical systems at the Australian National University.

> I am on the job market for 2027.

Long-term vision

To design architectures and optimization methods that give models a deep understanding of complex dynamical systems, enabling new scientific discoveries and laying the foundations for increasingly general, physics-inspired intelligence.

$ tail -n 3 news.log

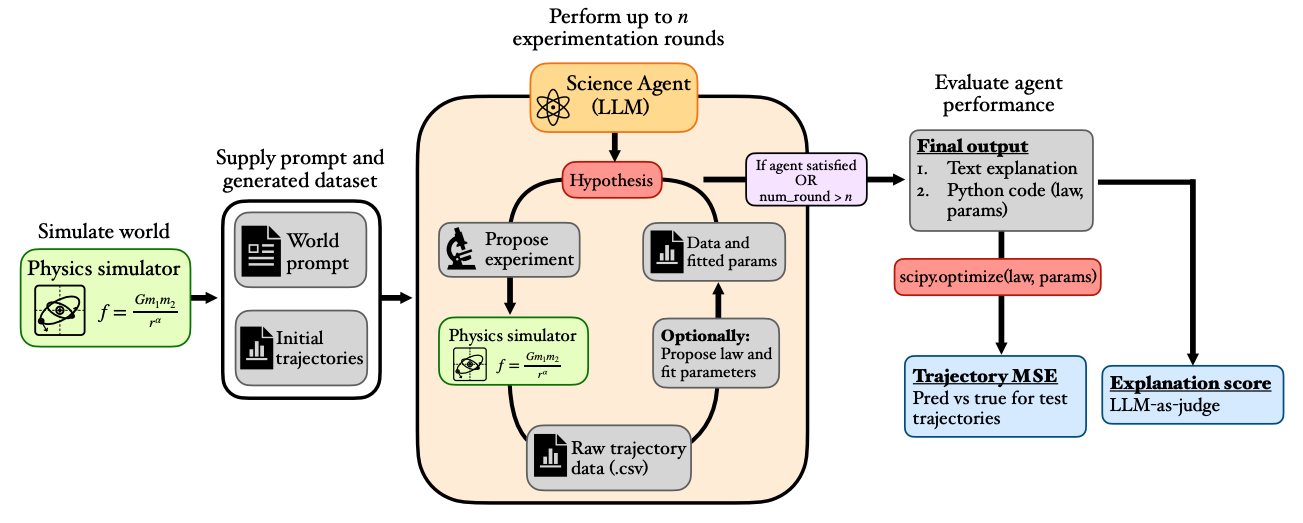

| May 27, 2026 | Excited to release DiscoverPhysics, a benchmark for outside the box scientific thinking. Paper here: arxiv.org/abs/2605.26087 |

|---|---|

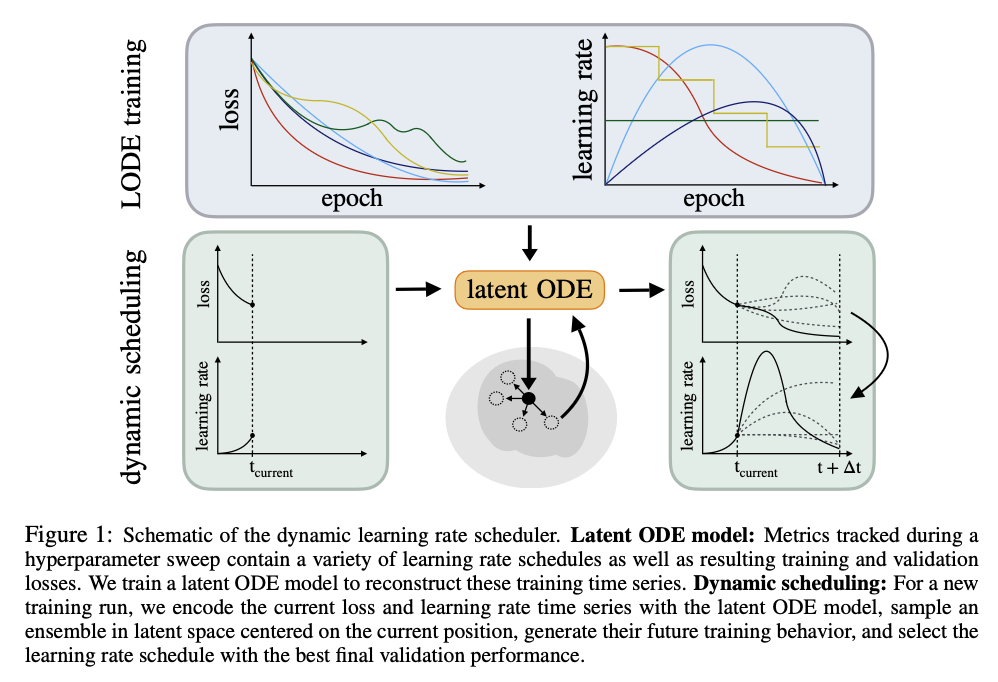

| Oct 01, 2025 | Excited to share our latent paper Dynamics of learning: Generating schedules from Latent ODEs, see my blog post |

| Sep 23, 2025 | Congratulations to Columbia undergraduate Angelina Yan on the acceptance of her NeurIPS workshop paper “A novel approach to classification of ECG arrhythmia types with latent ODEs” |

$ grep --author="Wiemann" papers.bib | head -4

* previously published as Matt L. Sampson

DiscoverPhysics: Benchmarking LLMs for Out-of-the-Box Scientific Thinking. arXiv preprint arXiv:2605.26087, 2026

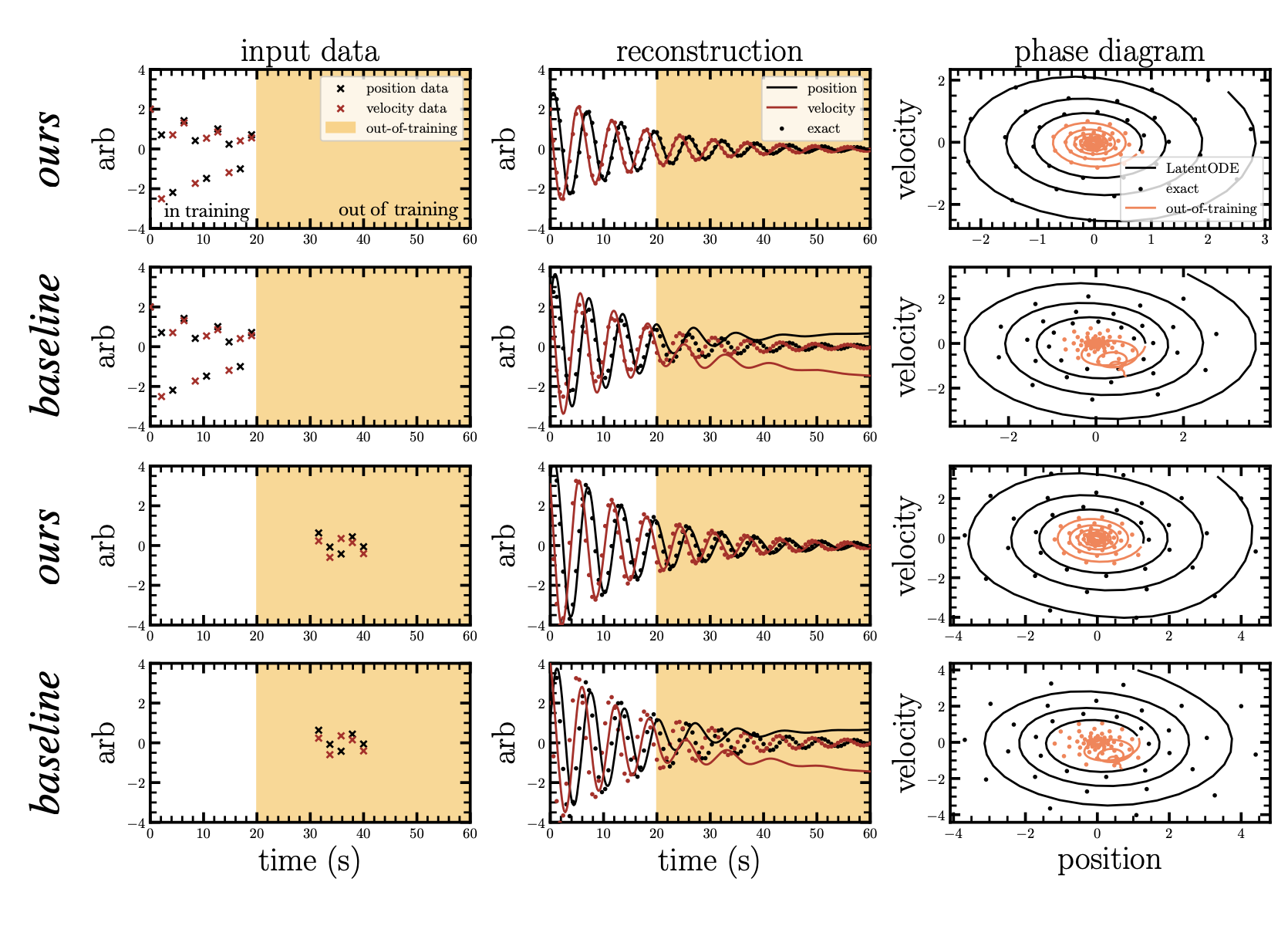

Path-minimizing latent ODEs for improved extrapolation and inference. Machine Learning: Science and Technology, Jun 2025

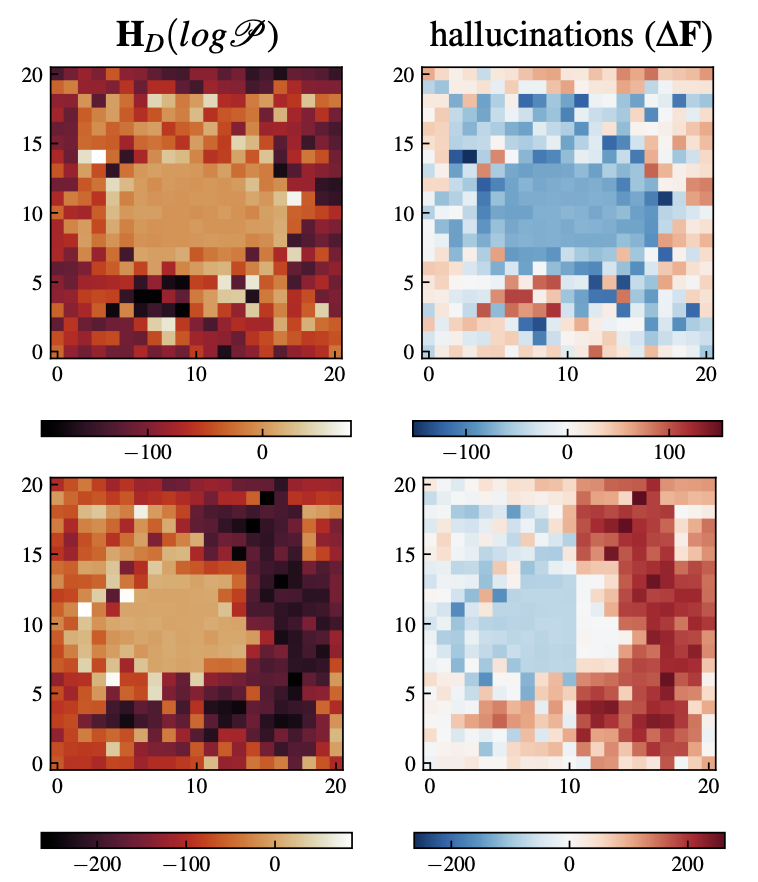

Spotting Hallucinations in Inverse Problems with Data-Driven Priors. In ICML ML4Astrophysics Workshop (Oral), Jul 2023

$ cat visitors.map